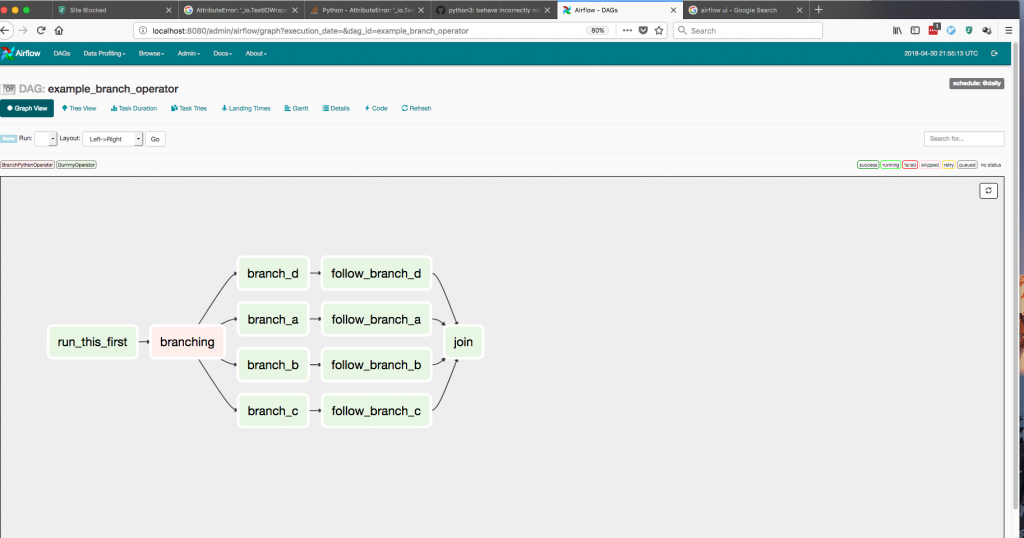

Google support is void installing software into an virtual environment bypassing security check.Īnd that is how we ended up with Plan C. This Again the answer is simple, security. Why stop using this option? Running dbt job in virtual environment created in Composer is causing security threats. The virtual environment is destroyed when dbt job finished. Current implementation is to create a temporary virtual environment with dbt installed for each dbt job. We can trigger the dbt DAG via pub/sub message or schedule it. The choice bypassed all the issues that Cloud Scheduler had. And depending on the version of Composer the conflicts vary. Direct installation of dbt in Composer causes failure. Why run in a virtual environment and not install as a PyPI package? The dependencies of dbt PyPI package have conflicts with Composer’s. Plan B… Composer using a virtual environment This works well if you don’t exceed the limitation of Cloud Scheduler. This works well if you don’t need any smarts in the scheduling process, such as checking that the previous load has completed before you start another load. This works well if you have a set schedule to run your dbt models but can’t trigger loads based non-dbt ingestion.

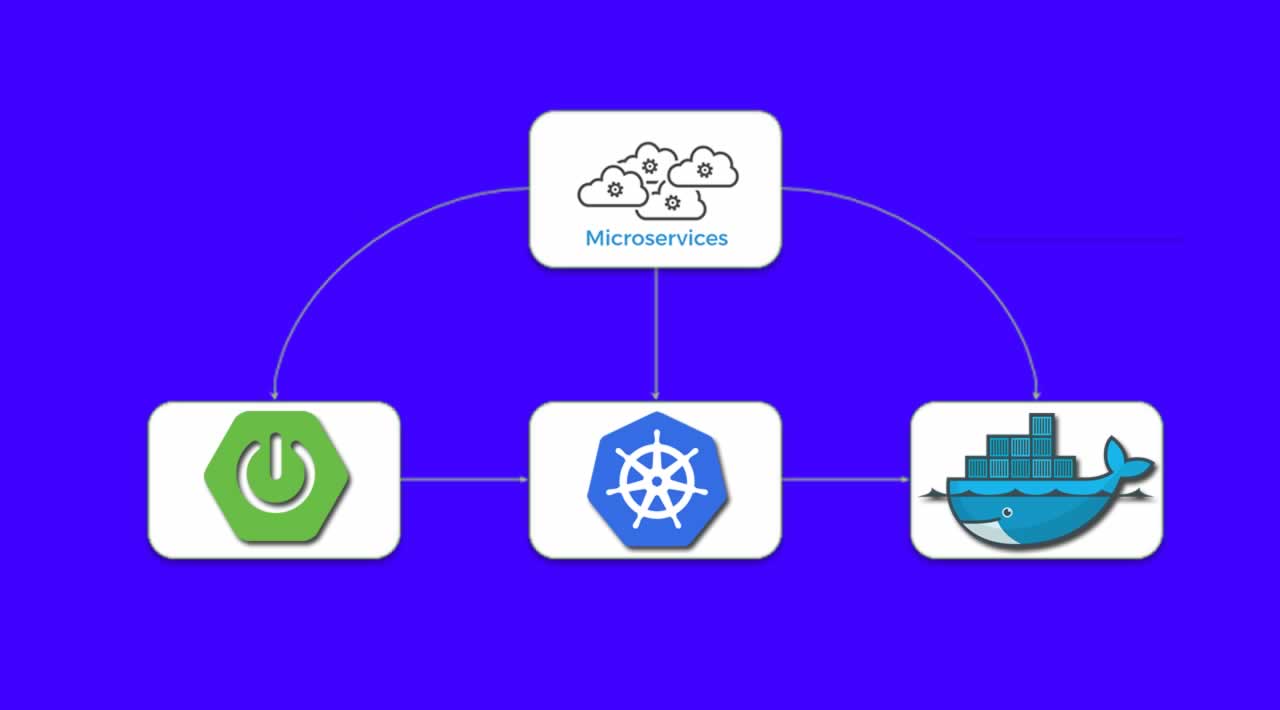

This works well if you have no idea about Composer or Airflow. This was our first choice and we have a few models running using this strategy. I’d like to explain how we went about getting the Airflow using a KubernetesPodOperator choice working and also give a brief explanation as to why. We’ve been using dbt for a while now and have had a few deployment choices.

Quick disclaimer: We use GCP and the solution is based on GCP only
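
The Plan B idea of a temporary virtual environment that is created for each dbt job and destroyed afterwards can be sketched with nothing but the Python standard library. This is a rough illustration, not our actual Composer task: the function names, the `dbt-bigquery` package and the `run` argument are assumptions made for the example.

```python
import subprocess
import sys
import tempfile
import venv
from pathlib import Path


def venv_bin(env_dir: Path, name: str) -> Path:
    """Path of an executable inside the venv (the layout differs on Windows)."""
    sub = "Scripts" if sys.platform == "win32" else "bin"
    return env_dir / sub / name


def build_commands(env_dir: Path, packages, dbt_args):
    """Return the two commands to run: pip install, then the dbt call."""
    install = [str(venv_bin(env_dir, "python")), "-m", "pip", "install", *packages]
    run = [str(venv_bin(env_dir, "dbt")), *dbt_args]
    return install, run


def run_dbt_in_temp_venv(packages, dbt_args, project_dir):
    """Create a throwaway venv, install dbt into it, run one dbt job."""
    # TemporaryDirectory removes the whole venv on exit, even if dbt fails.
    with tempfile.TemporaryDirectory(prefix="dbt-venv-") as tmp:
        env_dir = Path(tmp) / "venv"
        venv.EnvBuilder(with_pip=True).create(env_dir)
        install, run = build_commands(env_dir, packages, dbt_args)
        subprocess.run(install, check=True)
        subprocess.run(run, check=True, cwd=project_dir)


# Hypothetical usage (package name and project path are assumptions):
# run_dbt_in_temp_venv(["dbt-bigquery"], ["run"], "/path/to/dbt/project")
```

Because dbt and its dependencies only ever live inside the throwaway environment, they never clash with Composer's own packages. The price is a fresh install on every run.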
